Introduction to Sorting algorithm: Insertion and Selection Sort

What does sorting mean?

Sorting algorithm specifies the way to arrange data in a particular order. Most common orders are in numerical or lexicographical order. In general, the organization of different objects in order on the basis of size, color, shape, etc are referred to be sorted.

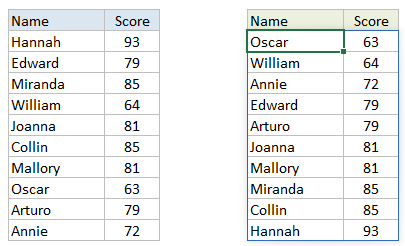

Example - Sorting of a table on the basis of score

Why Sorting?

Sometimes in real world applications, it is necessary to arrange the data in a sorted order to perform searching and other operations efficiently. Sorting is also a famous problem solving approach during the interview.

What is a sorting algorithm?

Sorting algorithms take lists of items as input data, perform specific operations on those lists and deliver ordered list as output. Applications of sorting algorithms include organizing items by price on a retail website and determining the order of sites on a search engine results page.

We will be discussing two fundamental sorting algorithms here:

- Selection sort

- Insertion sort

1. Selection Sort Algorithm

Solution Idea

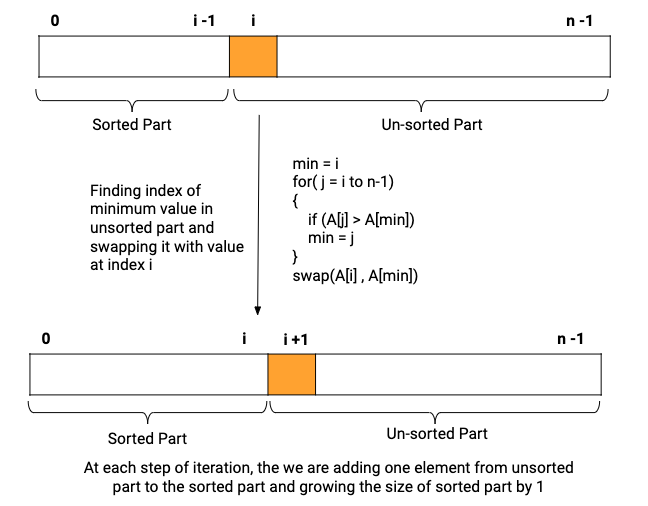

This is an in-place comparison-based sorting algorithm. During the selection sort algorithm, the array or list is divided into two parts: the sorted part at the left end and the unsorted part at the right end. Initially, the sorted part is empty and the unsorted part is the entire array or list.

Initially, the smallest element is selected from the unsorted array and swapped with the leftmost element, and that element becomes a part of the sorted array. This process continues to add one input from the unsorted part to the sorted part. (Think!)

Visual representation of selection sort

Solution Steps

Iterate over the input array from i = 0 to n -1 and repeat the following steps until the list is sorted:

- Initialize a variable minIndex = i

- For every index i, run a loop from j = i to n - 1 to find the index of the minimum value in the unsorted part

minIndex = i

for( j = i to n-1)

{

if (A[j] > A[minIndex])

minIndex = j

}- Swap value at location i with the value at location minIndex

- Increment i to point to the next element

Here is the visualization of the general step of the loop:

Pseudo Code

void selectionSort(int A[], int n)

{

for(int i = 0 to n-1, i = i+1)

{

int minIndex = i

for(int j = i to n-1, j = j+1)

{

if(A[j] < A[minIndex])

minIndex = j

}

swap(A[minIndex], A[i])

}

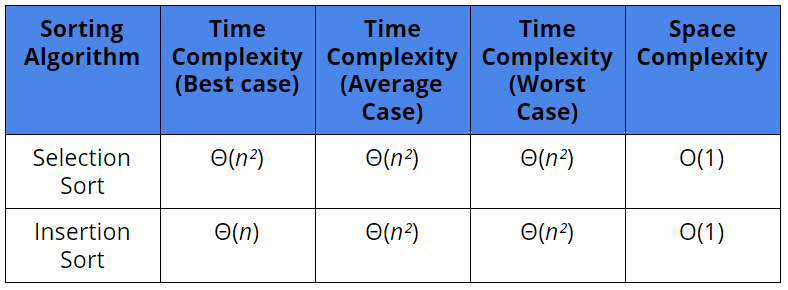

}Complexity Analysis

We are running two nested loops and performing the comparison and swapping operation to sort the input. Let's count both operations in the worst case:

for i = 0, n comparison

for i = 1, n-1 comparison

and so on.

Total number of coparison = n + n-1 + n-2 +......1 = n(n+1)/2

Similarly, total number of swapping = n (Think)Here the comparison is a major operation. Time Complexity = O(n²)

Let’s see how the Θ( n² ) running time affects the actual execution time. Let’s say that selection sort takes approximately n²/10⁶ seconds to sort n values. When n = 100, then running time is 100²/10⁶ = 1/100 sec. When n = 1000 then 1000²/10⁶ = 1. If the size of the input grew by a factor of 10 then the running time will increase 100 times.

Space Complexity = O(1)

Critical Ideas to Think

- How we design the selection sort algorithm for a linked list? What would be the time complexity in this case?

- Can we optimize the above code further?

- What are the best case and worst case time complexity of the selection sort? Do you think the number of comparisons affects the running time of the algorithm?

- Compare the time complexity of the selection sort and the other sorting algorithms? Which one looks best?

- Think of a real-life example when you arranged your things following a selection sort algorithm!

2. Insertion Sort Algorithm

Solution Idea

This is also an in-place comparison-based sorting algorithm. During the insertion sort algorithm, the array or list is divided into two parts: the sorted part at the left end and the unsorted part at the right end.

At each step of the iteration, we are adding one value from the unsorted part to the sorted part. or we can say that our size of the sorted part is growing by 1 after each step of iteration. Here we pick the first element of the unsorted part and find its correct place to insert in the partially sorted array.

This is an incremental design approach to solve the problem in algorithms where the partial solution is growing after each step of the iteration.

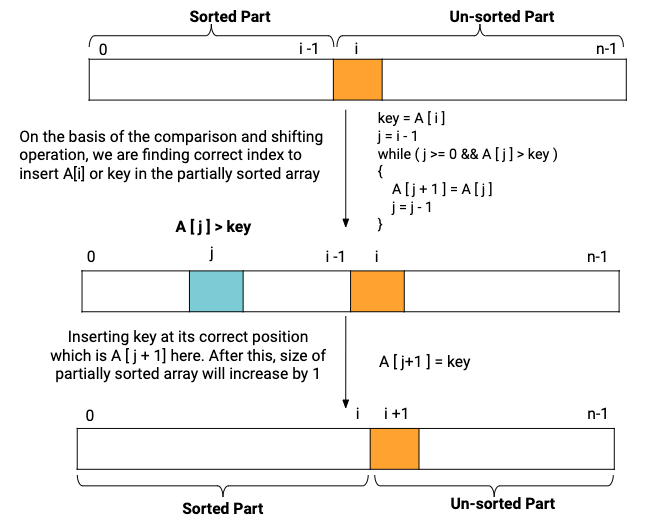

Visual representation of Insertion sort

Solution Steps

Iterate over the input array from i = 1 to n -1 and repeat the following steps until the list is sorted:

- Initialize a variable key and j (Think!)

key = A[i]

j = i - 1- For every index i, run a loop from j = i-1 to 0 and find the correct index to insert the value key in the partially sorted array.

while (j >= 0 && A[j] > key)

{

A[j + 1] = A[j]

j = j - 1

} - After the inner while loop, j + 1 will be the correct position of the key in the partially sorted array. Insert key at A[j+1] i.e. A[j+1] = key

- Move to the next element by incrementing the value of i.

Here is the visualization of the general step of the loop:

Pseudo Code

void insertionSort(int A[], int n)

{

for (int i = 1 to n-1; i = i+1)

{

int key = A[i]

int j = i - 1

// Shift elements of A[0..i-1], that are //

// greater than key, to one position ahead of their current position //

while (j >= 0 && A[j] > key)

{

A[j + 1] = A[j]

j = j - 1

}

A[j + 1] = key

}

} Complexity Analysis

We are running two nested loops and performing the comparison and shifting operation to sort the input. Let's count both operations in the worst case and the best case:

Worst case scenario: If our input is reversely sorted, then the insertion sort algorithm performs the maximum number of operations (Think!)

for i = 1, 1 comparison and 1 shift operation

for i = 2, 2 comparison and 2 shift operation

and so on.

Total number of coparison operation = 1 + 2 + 3 +......n-1 = n(n-1)/2

Total number of shifting operation = 1 + 2 + 3 +......n-1 = n(n-1)/2Worst case time complexity = O(n²)

Best case scenario: If our input is already sorted, then the insertion sort algorithm perform the minimum number of operations. (Think!)

for i = 1, only 1 comparison

for i = 2, only 1 comparison

and so on...

Total number of coparison operation = n-1

Total number of shifting operation = 0Best case time complexity = O( n )

We are not using any extra space to perform sorting. Space Complexity = O(1)

Critical Ideas to Think

- For almost sorted input, insertion sort works best.

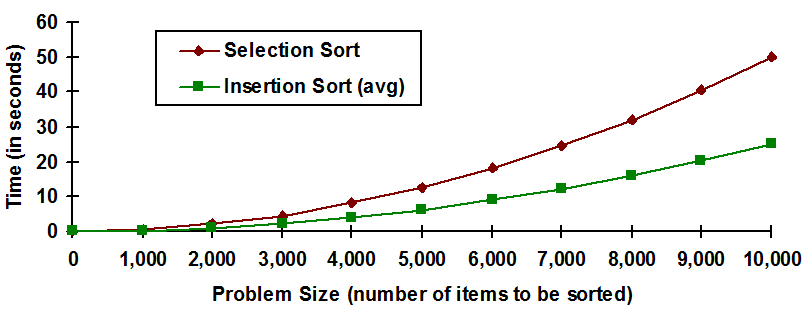

- Do you think that insertion sort is better than selection sort?

- How can we optimize the above code further?

- How we design the selection sort algorithm for a linked list? What would be the time complexity in this case?

- Selection sort is also an example of an incremental design approach . Prepare a list of problems where this approach can be used for the solution.

- Do you think that the relative order of items with equal keys does not change using the insertion sort algorithm?

- How we analyse the average case time complexity of the insertion sort algorithm? is it less than O(n^2) time complexity?

Why choose insertion or selection sort over O(n*logn) algorithms?

For small arrays (less than 20–30 elements), both insertion sort and selection sort are typically faster than the O(n*logn) alternatives. In fact, many sorting algorithms based on the divide and conquer paradigm switch to insertion sort or selection sort when the array is small enough.

Comparison between Insertion and Selection Sort

The selection sort always requires exactly

(n

²

+ n)/2

comparisons to sort n

items. In the worst case, an insertion sort requires

(n

²- n

)/2

. So, given any non-empty list, insertion sort will always perform fewer comparisons than selection sort.

Please comment down below if you find an error/bug in the above explanation. Happy Coding, Enjoy Algorithms!